
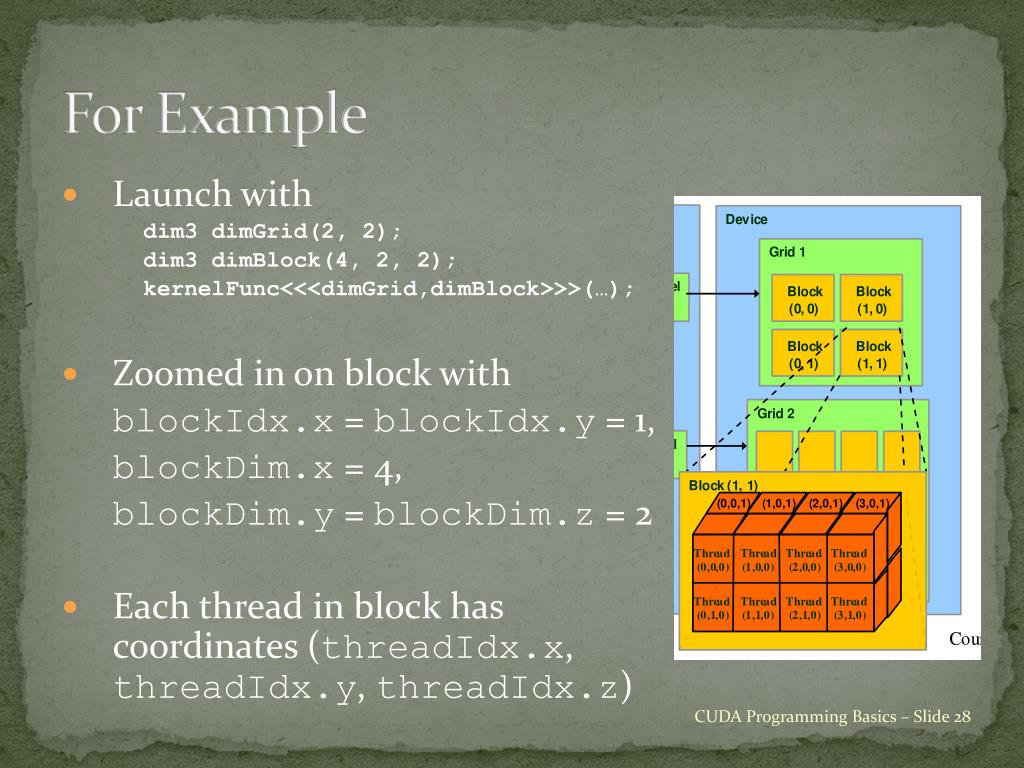

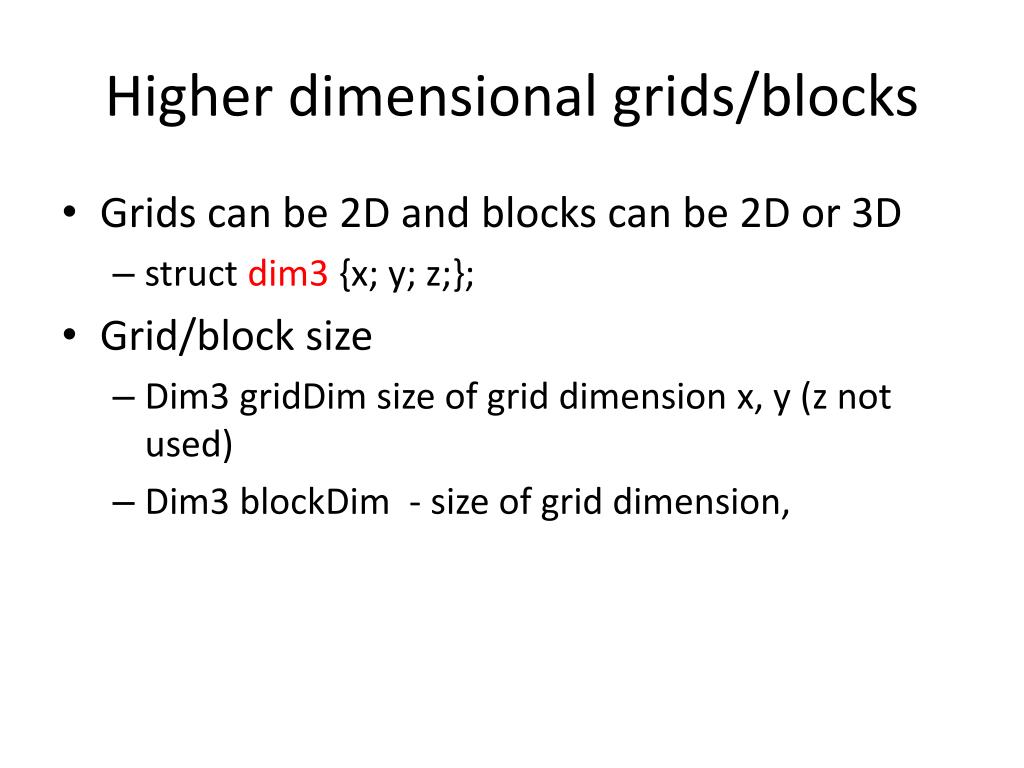

If you launch a kernel with the parameters dim3 block_dim(128,1,1) and dim3 grid_dim(10,1,1), then inside the kernel, threadIdx.x lies in [0, 128), blockIdx.x lies in [0, 10), and blockDim.x equals 128. Hence, when calculating threadIdx.x + blockIdx.x*blockDim.x, you get values in the range [0, 128) + 128 * [0, 10), which means your tid values range from 0 to 1279. I'm not sure where that would be relevant in your case. It all depends on your application and on how you map your threads to your data. This mapping is central to any kernel launch, and you are the one who determines how it is done. When you launch your kernel you specify the grid and block dimensions, and you are the one who has to enforce the mapping to your data inside your kernel.

Starting from this example, we will look at how to solve a problem in a two-dimensional domain using a two-dimensional grid and block. As we know, threads can be organized into multi-dimensional blocks, and blocks can in turn be organized into a multi-dimensional grid. This feature of the CUDA architecture lets us create a two-dimensional or even three-dimensional thread hierarchy, so that two- or three-dimensional problems become easier to express and to solve efficiently. In this example, we will do square matrix multiplication. The two input matrices, M and N, are of size Width x Width, and the output matrix P has the same size.
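Here is a minimal sketch of the square matrix multiplication described above. It assumes row-major storage and assigns one thread to each element of P. The names matMulKernel, computeElement, ceilDiv and matMulOnDevice, and the 16 x 16 block size (TILE), are hypothetical choices for illustration and do not come from the original text. Error checking is omitted for brevity.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper: ceiling division, used to round the grid up so every element gets a thread.
__host__ __device__ inline int ceilDiv(int a, int b) { return (a + b - 1) / b; }

// Hypothetical helper: dot product of row `row` of M with column `col` of N.
// All matrices are Width x Width and stored row-major.
__host__ __device__ inline float computeElement(const float* M, const float* N,
                                                int row, int col, int Width)
{
    float sum = 0.0f;
    for (int k = 0; k < Width; ++k)
        sum += M[row * Width + k] * N[k * Width + col];
    return sum;
}

// One thread per output element: the y components select the row, the x components select the column.
__global__ void matMulKernel(const float* M, const float* N, float* P, int Width)
{
    int row = blockIdx.y * blockDim.y + threadIdx.y;
    int col = blockIdx.x * blockDim.x + threadIdx.x;
    if (row < Width && col < Width)   // guard: the rounded-up grid may overhang the matrix
        P[row * Width + col] = computeElement(M, N, row, col, Width);
}

// Hypothetical host wrapper. TILE = 16 is an assumed block edge; error checks omitted.
void matMulOnDevice(const float* hM, const float* hN, float* hP, int Width)
{
    const int TILE = 16;
    size_t bytes = size_t(Width) * Width * sizeof(float);
    float *dM, *dN, *dP;
    cudaMalloc(&dM, bytes);
    cudaMalloc(&dN, bytes);
    cudaMalloc(&dP, bytes);
    cudaMemcpy(dM, hM, bytes, cudaMemcpyHostToDevice);
    cudaMemcpy(dN, hN, bytes, cudaMemcpyHostToDevice);

    dim3 block(TILE, TILE);                                  // 2-D block: 16 x 16 threads
    dim3 grid(ceilDiv(Width, TILE), ceilDiv(Width, TILE));   // 2-D grid covering all of P
    matMulKernel<<<grid, block>>>(dM, dN, dP, Width);

    cudaMemcpy(hP, dP, bytes, cudaMemcpyDeviceToHost);       // blocks until the kernel has finished
    cudaFree(dM);
    cudaFree(dN);
    cudaFree(dP);
}
```

The 2-D block maps directly onto a 2-D tile of P. The bounds check matters whenever Width is not a multiple of the block edge, because ceilDiv rounds the grid up.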

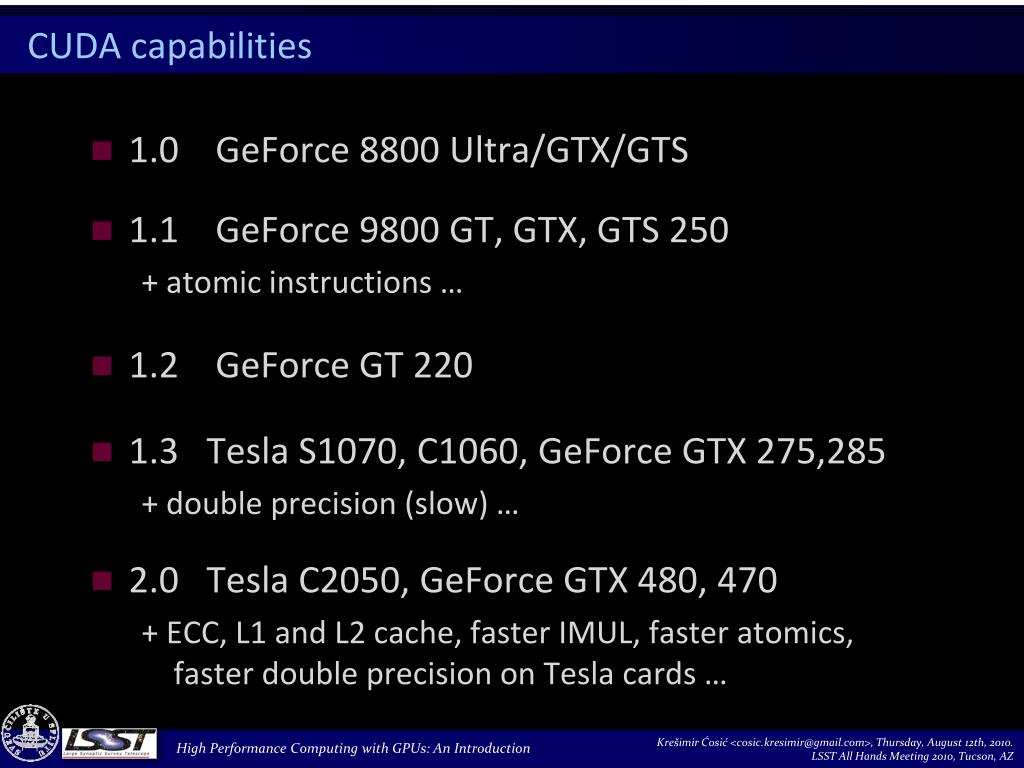

You might want to consider taking a couple of the introductory CUDA webinars available on the NVIDIA webinar page. GPU Computing using CUDA C – An Introduction (2010) is an introduction to the basics of GPU computing using CUDA C, and its concepts are illustrated with walkthroughs of code samples. GPU Computing using CUDA C – Advanced 1 (2010) covers first-level optimization techniques such as global memory optimization, and its concepts are illustrated using real code. It would be 2 hours well spent if you want to understand these concepts better. The general topic of grid-striding loops is covered in some detail here.

Paraphrased from the CUDA Programming Guide: gridDim contains the dimensions of the grid. blockIdx contains the block index within the grid. blockDim contains the dimensions of the block. threadIdx contains the thread index within the block.

You seem to be a bit confused about the thread hierarchy that CUDA has. In a nutshell, for a kernel there will be 1 grid (which I always visualize as a 3-dimensional cube). Each of its elements is a block, so a grid declared as dim3 grid(10, 10, 2) would have 10*10*2 = 200 blocks in total. In turn, each block is a 3-dimensional cube of threads. With that said, it's common to use only the x-dimension of the blocks and grids, which is what the code in your question appears to be doing. This is especially relevant if you're working with 1D arrays. In that case, blockDim.x is the size of each block and gridDim.x is the total number of blocks. So blockDim.x * gridDim.x is the total number of threads in your grid, and the line tid+=blockDim.x * gridDim.x advances each thread's unique index by one full grid-width.
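The hierarchy arithmetic described above can be checked with a short sketch. The helpers globalIndex1D and totalBlocks and the kernel writeIndex are hypothetical names. They only restate threadIdx.x + blockIdx.x*blockDim.x and the block count of a dim3 grid.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper: unique index of a thread within a 1-D grid,
// i.e. threadIdx.x + blockIdx.x * blockDim.x.
__host__ __device__ inline int globalIndex1D(int tIdx, int bIdx, int bDim)
{
    return tIdx + bIdx * bDim;
}

// Hypothetical helper: number of blocks in a grid declared as dim3 g(x, y, z).
__host__ __device__ inline unsigned int totalBlocks(dim3 g)
{
    return g.x * g.y * g.z;
}

// Hypothetical kernel: each thread records its own global index.
// The guard handles launches whose grid holds more threads than n.
__global__ void writeIndex(int* out, int n)
{
    int tid = globalIndex1D(threadIdx.x, blockIdx.x, blockDim.x);
    if (tid < n)
        out[tid] = tid;
}
```

Launched as writeIndex<<<dim3(10), dim3(128)>>>(out, 1280), the last thread of the last block computes 127 + 9*128 = 1279, so tid covers 0 through 1279.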

It's common practice when handling 1-D data to create only 1-D blocks and grids. In the CUDA documentation, these variables are defined here. In particular, when the total number of threads in the x-dimension (gridDim.x*blockDim.x) is less than the size of the array I wish to process, it's common practice to create a loop and have the grid of threads move through the entire array. In this case, after processing one loop iteration, each thread must move to the next unprocessed location, which is given by tid+=blockDim.x*gridDim.x. In effect, the entire grid of threads jumps through the 1-D array of data one grid-width at a time. This topic, sometimes called a "grid-striding loop", is further discussed in this blog article.
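Here is a minimal grid-stride loop of the kind described above. The loop body lives in a hypothetical __host__ __device__ helper, gridStrideScale, so it can also be exercised on the CPU. Scaling an array by s is just an assumed example workload.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper holding the loop body so it can run on host or device.
// start  = this thread's global index
// stride = total number of threads in the grid along x
__host__ __device__ inline void gridStrideScale(float* a, float s, int n,
                                                int start, int stride)
{
    for (int tid = start; tid < n; tid += stride)   // jump one grid-width per iteration
        a[tid] *= s;
}

// Correct for any n, even when gridDim.x * blockDim.x < n.
__global__ void scaleKernel(float* a, float s, int n)
{
    gridStrideScale(a, s, n,
                    blockIdx.x * blockDim.x + threadIdx.x,   // starting index
                    blockDim.x * gridDim.x);                 // grid-width stride
}
```

Because the stride is blockDim.x * gridDim.x, every element is visited exactly once whatever the launch configuration. For example, scaleKernel<<<dim3(10), dim3(128)>>>(a, 2.0f, n) still covers an array with n larger than 1280.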

blockDim.x,y,z gives the number of threads in a block in the corresponding direction, and gridDim.x,y,z gives the number of blocks in a grid in the corresponding direction. blockDim.x * gridDim.x therefore gives the number of threads in a grid (in the x direction, in this case). Block and grid variables can be 1, 2, or 3 dimensional.
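The per-direction rules described above extend to three dimensions in the same way. The sketch uses hypothetical helpers (threadsAlongX, totalThreads, linearIndex3D) and a hypothetical fillVolume kernel. The x-fastest row-major layout of the volume is an assumption.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper: threads in the grid along x, i.e. blockDim.x * gridDim.x.
__host__ __device__ inline unsigned int threadsAlongX(dim3 block, dim3 grid)
{
    return block.x * grid.x;
}

// Hypothetical helper: threads in the whole grid, all three directions.
// Widened to 64 bits before multiplying to avoid overflow.
__host__ __device__ inline unsigned long long totalThreads(dim3 block, dim3 grid)
{
    return (unsigned long long)block.x * block.y * block.z
         * grid.x * grid.y * grid.z;
}

// Hypothetical helper: row-major (x fastest) flattening for a volume nx wide and ny tall.
__host__ __device__ inline int linearIndex3D(int x, int y, int z, int nx, int ny)
{
    return (z * ny + y) * nx + x;
}

// Each thread applies the same index pattern in every direction.
__global__ void fillVolume(float* v, int nx, int ny, int nz, float value)
{
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    int z = blockIdx.z * blockDim.z + threadIdx.z;
    if (x < nx && y < ny && z < nz)   // guard against the grid overhanging the volume
        v[linearIndex3D(x, y, z, nx, ny)] = value;
}
```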
